|

12/30/2023 0 Comments Rds postgres shardingUsing pg_stat_activity to gain insights on impact As you're doing this, one of the biggest challenges is going to be joins or any other kind of dependencies between tables. And second, they wanted to make sure these tables are isolated. They wanted to make sure that the tables that they're moving off of that big monolith database into other servers were significant portions of the workload. They were trying to assess this based on two criteria. Instead, what they wanted to do is partition the tables based on certain use cases. They decided they did not want to do horizontal sharding.

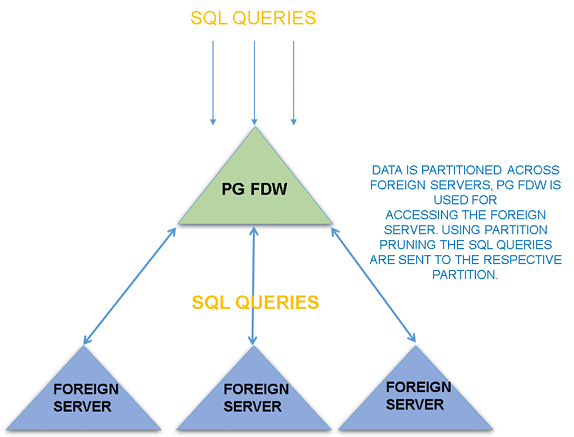

In Figma's case, they decided to do something else. Partitioning data based on certain use cases What they set up is that the same kind of schema exists in multiple locations, so you have a block, a space, and a comment, and they are on each physical database server. In Notion's case, they had one monolith and they essentially moved into, back then, 32 physical database servers. Now, Notion's approach back then was really well-described in the above linked blog post, but I think the diagram in their article summarizes it well. Garrett and his team at Notion were trying to figure out how to scale that. Notion has a similar problem where a lot of data, even more than the data in Figma's case, is stored in Postgres. Now, horizontal sharding would be closer to what Notion did a couple of years ago, where they described their lessons learned from sharding Postgres at Notion. They compare this with horizontal sharding. What they're referring to is that different tables are on different servers and so they split up the type of data on each database server. Now, what they mean with partitioning here is not the same as local table partitioning on a single node. What they instead ended up doing is they decided to partition the data. So they didn't really feel comfortable going with some of the options out there, like YugabyteDB or CockroachDB. They didn't want to be the first customers that hit certain scaling issues, they didn't want to lose control with managed solutions. And as you decide how you can scale Postgres, one of the things you consider is if you should be going to something else: Should we be going to a Postgres compatible solution that lets you just run multiple nodes?īack then in their assessment, none of the options really made sense for their situation. They were thinking about different options on how to deal with this. But in their situation, even though they made these changes, they still had limitations, because they still had everything going to that central database. All of these changes are very common and they all, in my experience, make a lot of sense as you're scaling. Lastly, they added PgBouncer as a connection pooler.

Once they had done that, they looked into creating read replicas to scale the read traffic, as well as establishing new databases for new use cases in order to not keep adding into that same central database. Read replicas and new databases for new use cases They were on a very large, very expensive r5.12xlarge, but they decided to doubled that to an r5.24xlarge – just to maximize that CPU utilization runway, because that really was the big issue. First of all, back then they still had some headroom in terms of which instance type to use. They started looking into different approaches on how to solve this.

Initially, they were not really sure how to deal with that. The problem that they encountered back then was that they observed upwards of 65% CPU utilization during peak traffic. Figma's infrastructure, in particular in regards to metadata like permissions, file information and comments, is all built on Postgres.īack then, they actually had a single large Amazon RDS database that persisted that type of metadata. In this blog post, they describe how Figma, back in 2020, hit some growing pains where they needed to figure out how to continue scaling. This is a blog post by Tim from the Figma engineering team from earlier this year. Let's dive in! How Figma scaled Postgres by partitioning tables between servers What we have discussed in this episode of 5mins of Postgres Using pg_stat_activity to gain insights on impact.Partitioning data based on certain use cases.Partitioning data in Postgres to have different tables on different servers Read replicas and new databases for new use cases.How Figma scaled Postgres by partitioning tables between servers

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed